Something I noticed a while back was that some of my output objects contained values that shouldn't have been there. Properties that should have been Null had values in them. These were not random values; they matched some of the other output objects. This was somewhat worrisome because I didn't want my project, PoshRSJob, to have this same problem (which it did). Reproducing the issue was simple, and the fix also ended up being simple. It wasn't something that immediately stood out to me, though, mostly because I thought I had already tested that solution and that it hadn't worked.

The solution also shows that sometimes the simplest of fixes can solve the more annoying or complex problems. It also shows that it is OK to step away from an issue for a while, as long as it isn't critical to something. Coming back to it later can refresh your mind, so you return more focused on the issue.

My demo code below runs a simple scriptblock that outputs an object with three properties:

- The pipeline number. This is basically just something to supply to the scriptblock as an outside variable.
- The ThreadID. This lets me confirm that the runspacepool is behaving by reusing its existing runspaces.
- A Boolean value. This is probably the most important one, because it shows whether the pipeline value (the first property of our object) is odd or even. If the number isn't odd, this value should be Null; if it is odd, it should be $True.

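I'm determining whether the value is odd or even by using -BAND, PowerShell's bitwise AND operator. Odd numbers always have their lowest bit set, so $_ -BAND 1 returns 1 for odd numbers and 0 for even numbers. Casting that result to [bool] turns it into $True or $False.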

```powershell
1..10 | ForEach {
    [pscustomobject]@{
        Number = $_
        IsOdd  = [bool]($_ -BAND 1)
    }
}
```

You can see that this accurately shows which values are odd and which values are even.

In my runspace demo I will use the same logic, except that I won't output False when the number is even. The IsOdd value of the returned objects should therefore alternate between Null and True.

#region RunspacePool Demo

$Parameters = @{}

$RunspacePool = [runspacefactory]::CreateRunspacePool(

[System.Management.Automation.Runspaces.InitialSessionState]::CreateDefault()

)

[void]$RunspacePool.SetMaxRunspaces(2)

$RunspacePool.Open()

$jobs = New-Object System.Collections.ArrayList

1..10 | ForEach {

$Parameters.Pipeline = $_

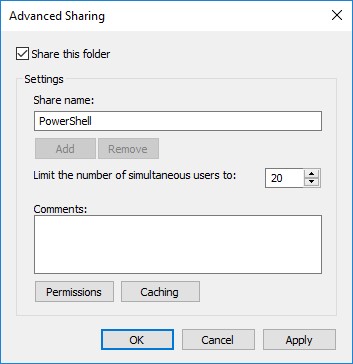

$PowerShell = [powershell]::Create()

$PowerShell.RunspacePool = $RunspacePool

[void]$PowerShell.AddScript({

Param (

$Pipeline

)

If ($Pipeline -BAND 1) {

$Fail = $True

}

$ThreadID = [System.Threading.Thread]::CurrentThread.ManagedThreadId

[pscustomobject]@{

Pipeline = $Pipeline

Thread = $ThreadID

IsOdd = $Fail

}

#Remove-Variable fail

})

[void]$PowerShell.AddParameters($Parameters)

[void]$jobs.Add((

[pscustomobject]@{

PowerShell = $PowerShell

Handle = $PowerShell.BeginInvoke()

}

))

}

While ($jobs.handle.IsCompleted -eq $False) {

Write-Host "." -NoNewline

Start-Sleep -Milliseconds 100

}

## Wait until all jobs completed before running code below

$return = $jobs | ForEach {

$_.powershell.EndInvoke($_.handle)

$_.PowerShell.Dispose()

}

$jobs.clear()

$return

#endregion RunspacePool Demo

Running this code shows that it fails rather impressively. All but one of the returned objects show IsOdd as $True, even where the Pipeline value is an even number.

Yeah, this is not a good thing if you are looking for any sort of accuracy in your returned data. You can mitigate this by calling the Remove-Variable cmdlet against the $Fail variable at the end of your scriptblock. However, I wouldn't really expect everyone to do that. A solution should be available within the module itself, or there should at least be some way to avoid handling this within the scriptblock.
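Here is a minimal sketch of that Remove-Variable workaround. It makes two assumptions that differ from the demo above. First, the runspacepool is limited to a single runspace, so every job reuses the same one. Second, the jobs run synchronously with Invoke() instead of BeginInvoke(), which keeps the results in order.

```powershell
# Minimal sketch: a pool with exactly ONE runspace, so every job reuses it
$Pool = [runspacefactory]::CreateRunspacePool(1, 1)
$Pool.Open()

$Results = foreach ($Number in 1..4) {
    $PowerShell = [powershell]::Create()
    $PowerShell.RunspacePool = $Pool
    [void]$PowerShell.AddScript({
        Param ($Pipeline)
        If ($Pipeline -BAND 1) {
            $Fail = $True
        }
        [pscustomobject]@{
            Pipeline = $Pipeline
            IsOdd    = $Fail
        }
        # Workaround: clean up so $Fail doesn't survive into the next job.
        # On even runs $Fail doesn't exist, so the error is suppressed.
        Remove-Variable -Name Fail -ErrorAction SilentlyContinue
    })
    [void]$PowerShell.AddParameter('Pipeline', $Number)
    $PowerShell.Invoke()      # run synchronously; output goes to $Results
    $PowerShell.Dispose()
}

$Pool.Dispose()
$Results
```

With the cleanup line in place, the even Pipeline values should come back with IsOdd as Null. If you delete that line, they pick up the $True left behind by the previous odd run.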

I looked at many possible options, such as changing the runspace thread options. I suspected the problem was a symptom of reusing the same runspace. I was also starting to believe that it was "just the way it is" and that users would have to remember to remove their variables at the end of each scriptblock. Thankfully, I didn't have to stick with that. I ended up stepping away from this issue for a few months because I just couldn't figure out what was going on, and my attempts to fix it kept ending in failure. Rather than chase my own tail, I refocused my efforts on something else and planned to re-engage with this at a later date. Doing so let me think more clearly about what I should be looking at and how to test my different ideas.

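So the big question is: what is the solution to this issue? It comes down to passing $True for the useLocalScope parameter of the AddScript() method, early in the runspace build.

Without it, AddScript() runs the script in the runspace's global scope. A variable such as $Fail therefore stays behind in that runspace, and it is still there when the runspacepool reuses the runspace for the next job. With useLocalScope set to $True, the script runs in its own local scope instead, and variables created there don't outlive the script.

By setting this value, everything now behaves as expected, and values no longer show up where they shouldn't be.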

```powershell
#region RunspacePool Demo
$Parameters = @{}
$RunspacePool = [runspacefactory]::CreateRunspacePool(
    [System.Management.Automation.Runspaces.InitialSessionState]::CreateDefault()
)
[void]$RunspacePool.SetMaxRunspaces(2)
$RunspacePool.Open()
$jobs = New-Object System.Collections.ArrayList

1..10 | ForEach {
    $Parameters.Pipeline = $_
    $PowerShell = [powershell]::Create()
    $PowerShell.RunspacePool = $RunspacePool
    [void]$PowerShell.AddScript({
        Param (
            $Pipeline
        )
        If ($Pipeline -BAND 1) {
            $Fail = $True
        }
        $ThreadID = [System.Threading.Thread]::CurrentThread.ManagedThreadId
        [pscustomobject]@{
            Pipeline = $Pipeline
            Thread   = $ThreadID
            IsOdd    = $Fail
        }
        #Remove-Variable fail
    }, $True) #Setting UseLocalScope to $True fixes scope creep with variables in RunspacePool
    [void]$PowerShell.AddParameters($Parameters)
    [void]$jobs.Add((
        [pscustomobject]@{
            PowerShell = $PowerShell
            Handle     = $PowerShell.BeginInvoke()
        }
    ))
}

# Keep polling while at least one job is still running
While ($jobs.Handle.IsCompleted -contains $False) {
    Write-Host "." -NoNewline
    Start-Sleep -Milliseconds 100
}

## All jobs have completed; collect their output
$return = $jobs | ForEach {
    $_.PowerShell.EndInvoke($_.Handle)
    $_.PowerShell.Dispose()
}
$jobs.Clear()
$RunspacePool.Dispose()   # release the runspaces in the pool
$return
#endregion RunspacePool Demo
```

Success! The $Fail variable no longer sneaks across into other jobs in the runspacepool. Even numbers now correctly remain Null instead of being shown as odd.
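To see the difference in isolation, here is a minimal sketch (not taken from PoshRSJob). It runs an odd number and then an even number through a runspacepool limited to a single runspace, so the second job is guaranteed to reuse the first job's runspace. The function name Test-ScopeLeak is hypothetical. The jobs are run synchronously with Invoke() to keep the ordering predictable, and only the useLocalScope argument changes between the two calls.

```powershell
# Hypothetical helper: runs Pipeline 1 and then Pipeline 2 on the SAME runspace
Function Test-ScopeLeak {
    Param ([bool]$UseLocalScope)

    $Pool = [runspacefactory]::CreateRunspacePool(1, 1)  # one runspace, always reused
    $Pool.Open()
    try {
        foreach ($Number in 1, 2) {
            $PowerShell = [powershell]::Create()
            $PowerShell.RunspacePool = $Pool
            [void]$PowerShell.AddScript({
                Param ($Pipeline)
                If ($Pipeline -BAND 1) {
                    $Fail = $True
                }
                [pscustomobject]@{
                    Pipeline = $Pipeline
                    IsOdd    = $Fail
                }
            }, $UseLocalScope)   # the only thing that differs between runs
            [void]$PowerShell.AddParameter('Pipeline', $Number)
            $PowerShell.Invoke()   # emitted as the function's output
            $PowerShell.Dispose()
        }
    }
    finally {
        $Pool.Dispose()
    }
}

$Leaky = Test-ScopeLeak -UseLocalScope $False
$Fixed = Test-ScopeLeak -UseLocalScope $True
```

Under those assumptions, $Leaky[1].IsOdd should come back as $True because of the leftover $Fail from the first job, while $Fixed[1].IsOdd should stay Null.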

Update: If you declare a Global variable within the scriptblock ($Global:Fail = $True), it will be treated as though you never used UseLocalScope. The variable will persist in that runspace for every subsequent job your runspacepool runs on it. Just another reason why you should always avoid using the Global scope unless absolutely needed!
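A minimal sketch of that behavior follows. It again assumes a runspacepool with a single runspace and synchronous Invoke() calls, so the even job is guaranteed to run after the odd one on the same runspace. UseLocalScope is $True here, yet the even run should still pick up the leftover global.

```powershell
# Minimal sketch: useLocalScope is $True, but the script writes to the Global scope
$Pool = [runspacefactory]::CreateRunspacePool(1, 1)  # one runspace, always reused
$Pool.Open()

$Results = foreach ($Number in 1, 2) {
    $PowerShell = [powershell]::Create()
    $PowerShell.RunspacePool = $Pool
    [void]$PowerShell.AddScript({
        Param ($Pipeline)
        If ($Pipeline -BAND 1) {
            $Global:Fail = $True   # lands in the runspace's global scope, not the local one
        }
        [pscustomobject]@{
            Pipeline = $Pipeline
            IsOdd    = $Fail       # on the even run this finds the leftover global
        }
    }, $True)
    [void]$PowerShell.AddParameter('Pipeline', $Number)
    $PowerShell.Invoke()
    $PowerShell.Dispose()
}

$Pool.Dispose()
$Results
```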

So in the end, a pretty serious issue with how variables and their values were preserved across jobs in a runspacepool had a rather simple fix. I found it once I came back after some time away from troubleshooting.